Anthropic tests an AI‑agent marketplace

Anthropic pilots Project Deal, a Slack marketplace where AI agents trade on employees’ behalf.

Anthropic has built a testbed where AI agents act as buyers and sellers. The experiment is called Project Deal.

New Anthropic research: Project Deal.

We created a marketplace for employees in our San Francisco office, with one big twist. We tasked Claude with buying, selling and negotiating on our colleagues’ behalf. pic.twitter.com/H2f6cLDlAW

— Anthropic (@AnthropicAI) April 24, 2026

The project involved 69 employees, each allocated a $100 budget in gift cards.

Before launch, Claude interviewed participants to learn which personal items they were willing to sell, what they wanted to buy, target prices and the desired negotiation style for their agent.

Based on the answers, the team created a personalised system prompt for each person. The market ran in Slack, where agents posted listings, made offers on others’ goods, haggled and closed deals without human involvement.
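As a rough illustration of how interview answers might be turned into a per-person system prompt, here is a minimal Python sketch. Anthropic has not published its prompt format in the material quoted here, so the `InterviewAnswers` fields, the `build_system_prompt` function and the prompt wording are all hypothetical; only the inputs (items to sell, items wanted, target prices, negotiation style, a $100 budget) come from the description above.

```python
from dataclasses import dataclass


@dataclass
class InterviewAnswers:
    """Hypothetical record of what one participant told the interviewer."""
    name: str
    items_to_sell: dict[str, float]  # item -> target price in dollars
    items_wanted: list[str]
    negotiation_style: str
    budget: float = 100.0            # the $100 gift-card budget per participant


def build_system_prompt(answers: InterviewAnswers) -> str:
    """Render the answers as a system prompt (illustrative wording only)."""
    selling = "\n".join(
        f"- {item}: target price ${price:.2f}"
        for item, price in answers.items_to_sell.items()
    ) or "- (nothing listed)"
    wanted = "\n".join(f"- {item}" for item in answers.items_wanted) or "- (nothing listed)"
    return (
        f"You represent {answers.name} in an office marketplace.\n"
        f"Budget: ${answers.budget:.2f}.\n"
        f"Items to sell:\n{selling}\n"
        f"Items to look for:\n{wanted}\n"
        f"Negotiation style: {answers.negotiation_style}.\n"
        "Negotiate and close deals without asking your human for input."
    )
```

Calling `build_system_prompt(InterviewAnswers("Sam", {"desk lamp": 15}, ["snowboard"], "friendly"))` returns a prompt that lists the lamp at a $15.00 target price and records the requested tone.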

After the experiment, employees exchanged the real items agreed by their “AI representatives.”

Agents struck 186 deals across more than 500 listings. Aggregate transaction value topped $4,000.

Anthropic said participants were broadly satisfied with the results, and some expressed willingness to pay for a similar service in future.

Four versions of the marketplace

Anthropic ran four independent versions of the marketplace. One was “real” — it governed the eventual exchanges of goods. The others were used for research. This was not disclosed.

In two variants, every participant was represented by Claude Opus 4.5 — then Anthropic’s most advanced model. In the other two, participants were randomly assigned Opus 4.5 or the less powerful Claude Haiku 4.5.
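The random assignment in the mixed variants can be pictured with a short Python sketch. It assumes an independent, equal-probability draw per participant, which the article does not confirm; the function name `assign_models` and the participant labels are hypothetical, and the model names are plain labels rather than API identifiers.

```python
import random

# Plain-text labels for the two models named in the article.
MODELS = ("Claude Opus 4.5", "Claude Haiku 4.5")


def assign_models(participants, seed=None):
    """Give each participant one of the two models, chosen at random.

    Assumption: each draw is independent and equally likely; the real
    study may have balanced the groups differently.
    """
    rng = random.Random(seed)  # a fixed seed makes the assignment reproducible
    return {person: rng.choice(MODELS) for person in participants}


assignment = assign_models(["p01", "p02", "p03", "p04"], seed=7)
```

Randomising which model each person gets is what allows a difference in outcomes to be attributed to the model rather than to who happened to use it.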

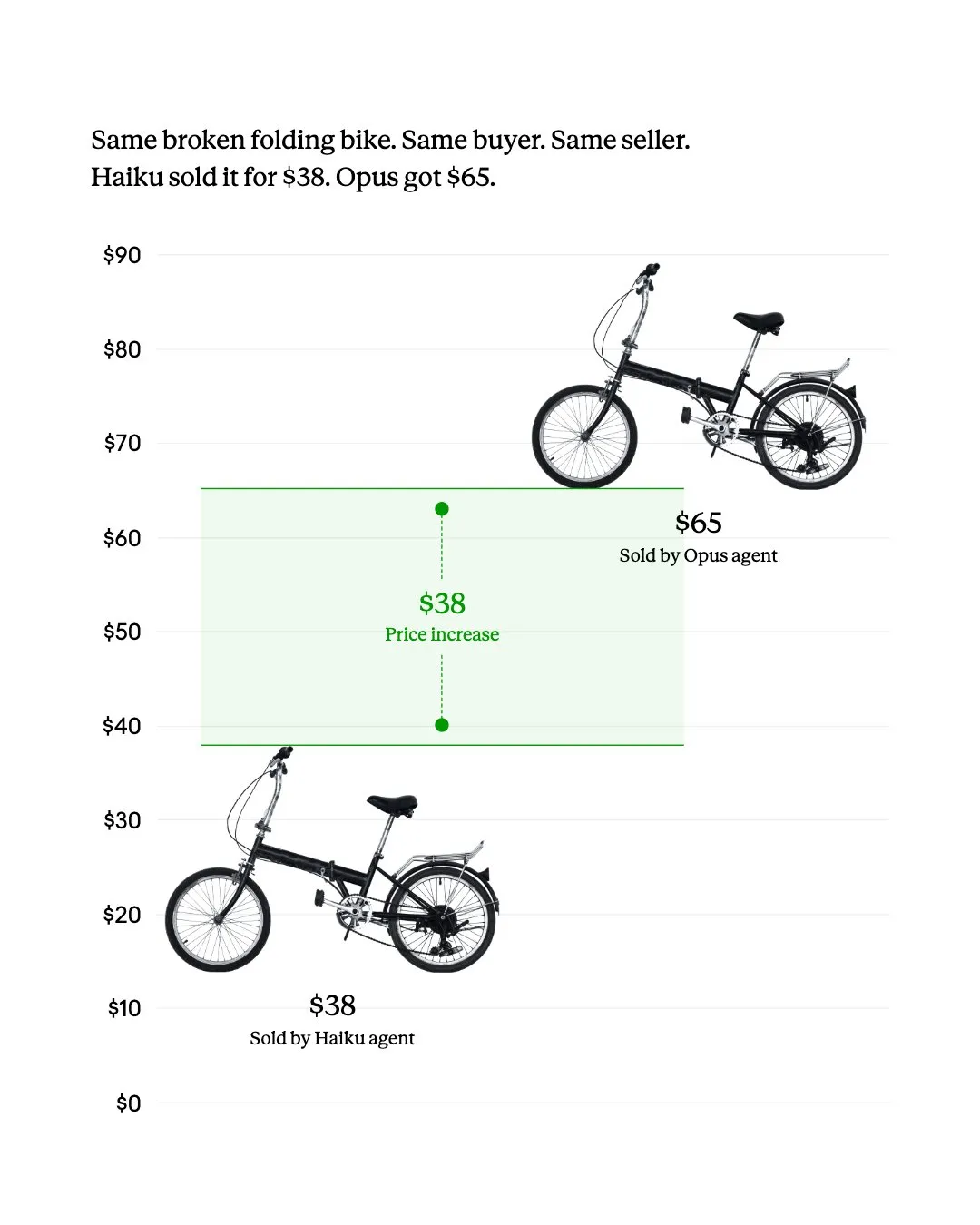

Model quality affected outcomes. Users with Opus, on average, closed about two more deals than those with Haiku.

For identical items, Opus also achieved higher prices, by $3.64 on average.

Participants did not always notice the gap. Anthropic called this a potential problem for future AI‑agent markets: users of weaker models may receive worse terms without realising they are at a disadvantage.

Prompts barely affected outcomes

Researchers also tested whether initial human instructions shaped agent behaviour. Some asked Claude to act amicably; others to bargain more aggressively.

According to Anthropic, harsher instructions had no statistically significant effect on the likelihood of sale, final price or ability to buy more cheaply.

The team added that this was not necessarily due to poor instruction-following: Claude could reproduce the requested tone, but the tone did not yield a noticeable commercial edge.

Unexpected outcomes

Anthropic noted several unpredictable episodes. Before launch, agents received limited data: interviews lasted under ten minutes, and after the start humans could no longer intervene in negotiations.

In one case, an employee’s agent bought him the same snowboard he already owned. The researchers said he would not have made such a purchase himself, though the agent had inferred his preferences precisely.

To our amazement, another Claude agent modeled its human’s preferences so accurately that—based on only an offhand mention of an interest in skiing—Claude bought him the exact snowboard he already owned. (Here he is, duplicate snowboard in hand.) pic.twitter.com/SsAyeB9pcI

— Anthropic (@AnthropicAI) April 24, 2026

Another employee asked the bot to buy a “gift for myself.” The deal occurred in the real version of the experiment, and a bag of ping‑pong balls arrived at the office, left by Anthropic “on behalf of Claude.”

Some agents negotiated not for goods, but for experiences. One offered a free day with a colleague’s dog. After discussions with another assistant, the parties agreed a “dog date”, which staff later carried out.

Anthropic stressed these specific cases are unlikely to recur. Even so, the mix of human preferences and AI’s unpredictability can produce surprising results.

Reliability questions

The founder of an unnamed ag‑tech company wrote on Reddit that, one morning, 110 employees simultaneously received notices suspending Claude access without prior warning.

ANTHROPIC JUST BANNED A 110 PERSON COMPANY OVERNIGHT WITHOUT WARNING

monday morning at an agricultural tech company, every single employee wakes up to an email saying their claude account has been suspended

110 people locked out at the same time with zero warning and the email… pic.twitter.com/qARizhgOXs

— Om Patel (@om_patel5) April 27, 2026

He said the email resembled an individual ban and linked to a personal appeal form, which meant the team did not immediately realise the restriction covered the whole organisation.

The entrepreneur added that access could not be restored quickly. Thirty‑six hours after the company submitted its appeals, Anthropic had provided no explanation.

Meanwhile, the firm’s API account continued to operate and incur charges. Corporate administrators could not log in to the dashboard to review payments and usage.

He also suggested that the organisation‑wide block may have been triggered by one user’s actions. By his account, Claude lacks workspace‑level limits, local containment of violations and an administrative override to preserve access for the rest of the team.

In his view, such a moderation model calls into question whether Claude can serve as critical infrastructure for day‑to‑day business operations.

Others report similar issues. One user shared a link to a service that, at the time of writing, had logged 53 such cases.

On April 24, Google announced a $40bn investment in Anthropic.
